I review all the books I read; here is a selection of my favorites from 2023:

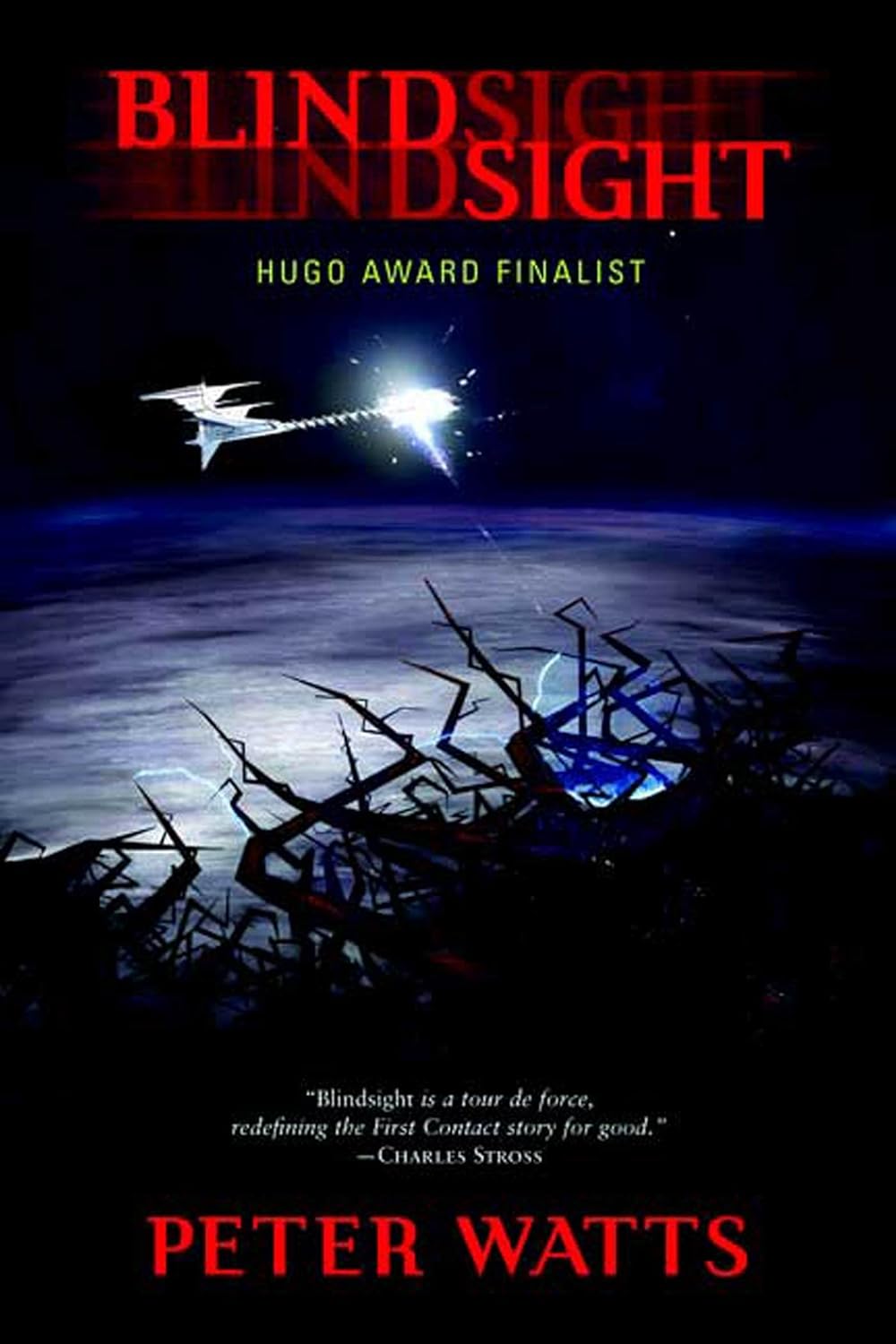

Blindsight by

Blindsight does a great job of exploring the nature of consciousness and intelligence. Watts keeps the tension high and the plot moving quickly in this thought-provoking sci-fi novel. My favorite book of the year!

Blindsight is a hard sci-fi novel about first contact with aliens in the near future. A crew of four transhumans and a vampire are sent on a spaceship to investigate an anomaly in the solar system after a swarm of alien probes scan Earth.

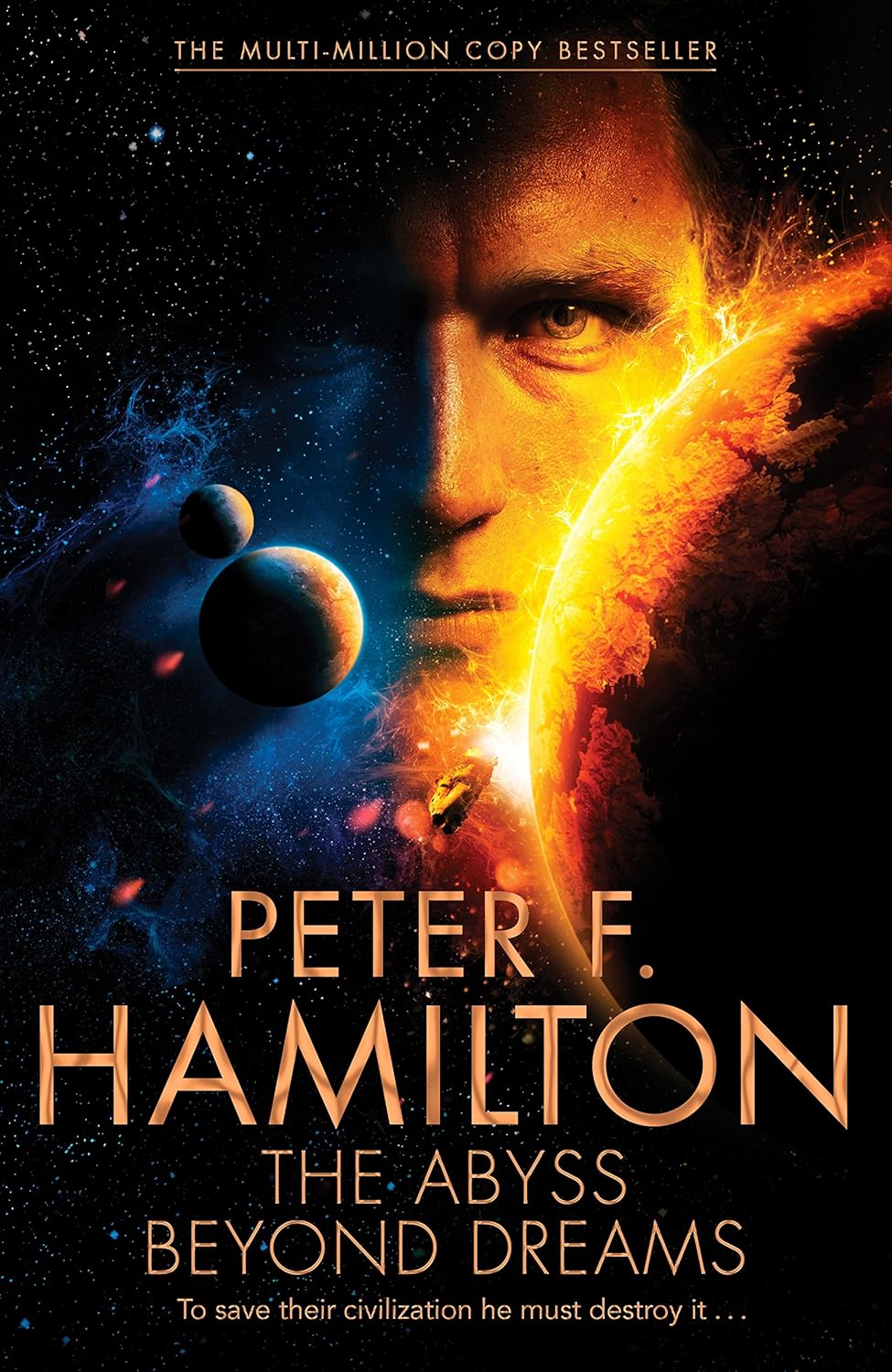

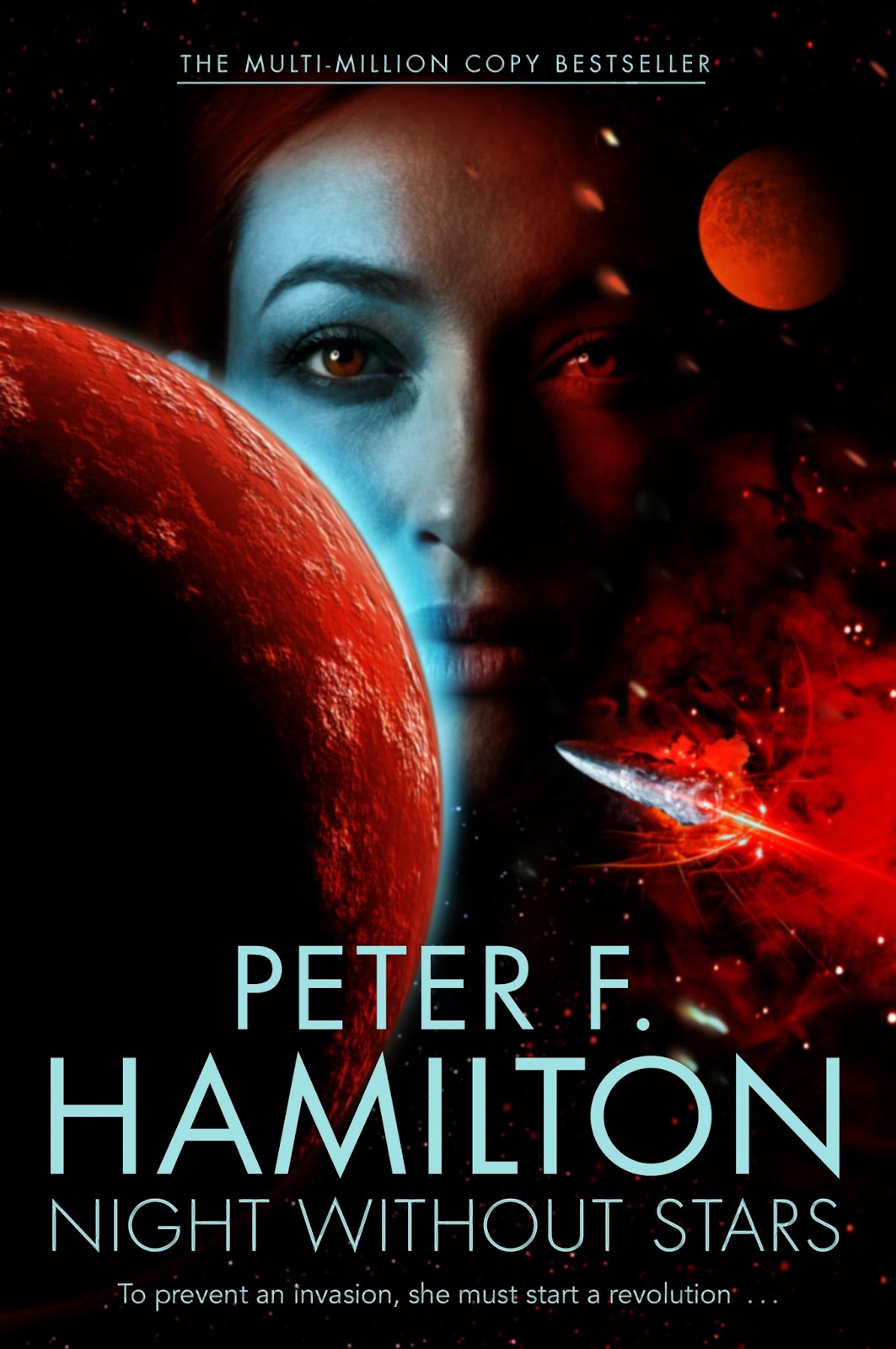

The Chronicle of the Fallers by

Hamilton is known for his space opera, but The Abyss Beyond Dreams is more of an urban fantasy set during the Russian Revolution (in space), and Night Without Stars is a thriller set during the Cold War (again, in space). Both feature Commonwealth citizens with special knowledge as “Outside Context Problems”, pulling the stories into science fiction territory.

The Abyss Beyond Dreams starts off The Chronicle of the Fallers, another series in Hamilton’s Commonwealth universe. Though billed as space opera, it often reads more like urban fantasy, since most of the story takes place on the planet Bienvenido inside the Void, where steam engines are the most advanced technology.

Night Without Stars is the second book in The Chronicle of the Fallers. It is action-packed, with great pacing and complex characters. It is my new favorite Hamilton book.

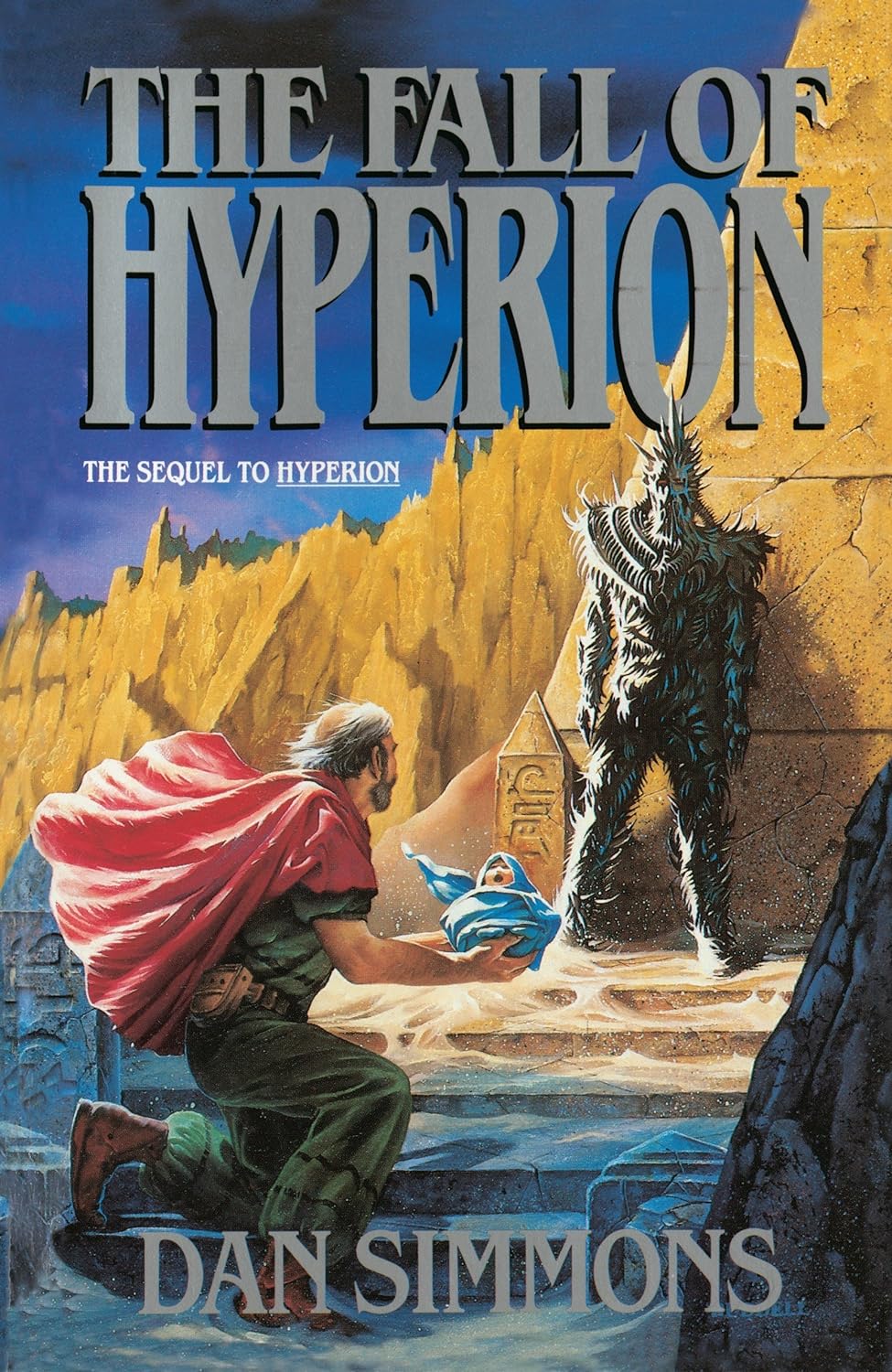

The Fall of Hyperion by

Of the two books, I enjoyed the sequel to Hyperion more because it tied the personal stories of the pilgrims to a much broader galactic conflict. Interestingly, you can see many ideas in the Hyperion Cantos that Hamilton later adopted in his Commonwealth Saga, including wormholes, a breakaway-but-helpful AI, and factions of scheming AIs who want either to eradicate humanity or to uplift it.

The Fall of Hyperion is a sequel that outshines its predecessor. It is everything I was expecting from Hyperion and more! A true masterpiece.

The Commonwealth Saga by

Epic space opera with a massive cast of characters and incredible pacing.

I couldn’t put Pandora’s Star down! It is a sci-fi book that reads more like a thriller. There was always a new mystery, and just a few more pages promised the answer.

Judas Unchained, the sequel to Pandora’s Star, picks up right where the first book left off, but with the action ramped up to 11. The various storylines and loose threads come together one by one until it’s the good guys racing against the bad guys for the fate of the universe.

Serpent Valley by

1980s mech sci-fi re-imagined for the 21st century. Warren’s self-published series takes a few books to really find its feet, but once it does, it’s a quick, fun, nostalgic read. The third book, Serpent Valley, exemplifies the series.

Serpent Valley, the third book in the War Horses series, is another quick, action-packed read, but without the flaws that held back its predecessors. Easily my favorite of the series so far!